Have you ever wondered why a RAW photo looks different from the scene you remember? Why do the colors feel off, or the shadows seem flat, when you first open a file?

The answer lies in how cameras capture light and how software translates those measurements into images.

This post explains demosaicing, what linear light means, and how human vision differs from a camera sensor. Understanding these concepts gives you more control over your editing workflow and helps you produce images that feel truer to your experience.

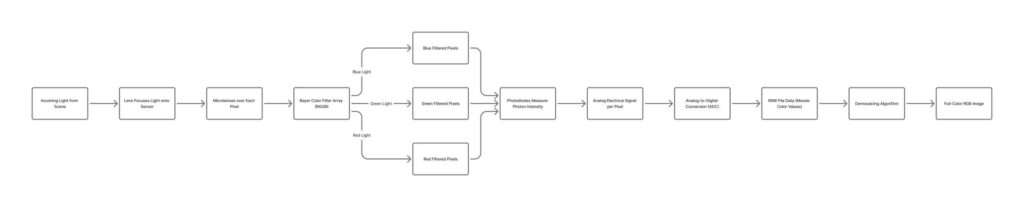

How Camera Sensors Capture Light

Digital cameras rely on sensors to measure light. Each sensor photosite records the intensity of light hitting it, filtered through a red, green, or blue filter.

This means that each pixel in a RAW file holds a value for only one color.

Unlike film, sensors are linear: they record light proportionally. Twice the light hitting a photosite produces twice the value in the RAW data, up to the point where the photosite saturates. This preserves the true brightness relationships in the scene, but it also means that the RAW file is not yet a photograph in the traditional sense.

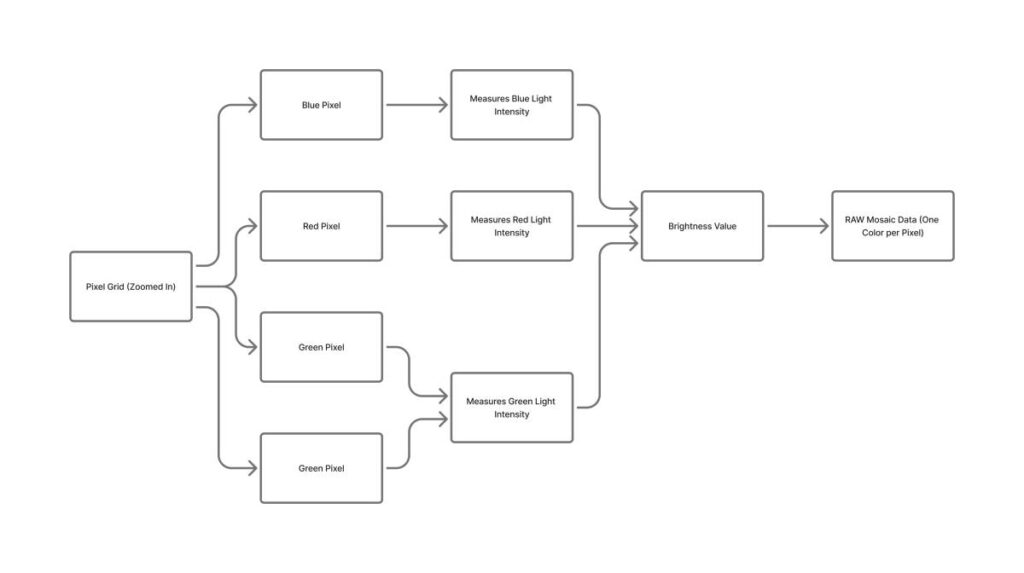

The Bayer Filter and Its Role

Most cameras use a Bayer filter, which overlays red, green, and blue filters across the sensor in a repeating pattern.

Green appears twice as often as red or blue because human vision is most sensitive to green light, which carries most of the brightness (luminance) detail we perceive.

This filter pattern is fixed for every image. Because each pixel only has one color value, software must reconstruct the missing colors to produce a full-color image.

This process is called demosaicing.
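
To make the pattern concrete, here is a minimal sketch of how a Bayer sensor samples a scene. It assumes an RGGB layout and uses made-up pixel values; the names `BAYER_TILE`, `filter_color`, and `mosaic` are only for illustration and do not come from any RAW library.

```python
# Assumed RGGB layout for illustration; real sensors may start the
# repeating 2x2 tile on a different color.
BAYER_TILE = (("R", "G"), ("G", "B"))
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def filter_color(row, col):
    """Return the color of the filter over the photosite at (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def mosaic(rgb_image):
    """Keep only the filtered channel at each photosite, as a sensor would."""
    return [
        [pixel[CHANNEL_INDEX[filter_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# Hypothetical 2x2 patch of uniform orange, as (R, G, B) sensor counts.
scene = [[(200, 100, 40)] * 2 for _ in range(2)]
print(mosaic(scene))  # [[200, 100], [100, 40]]
```

Each photosite keeps one number out of three, so two thirds of the color information has to be estimated later.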

Most cameras use a Bayer filter, but not all: many Fujifilm X-series cameras, for example, use a different pattern called X-Trans. Would you like an article explaining which sensors different camera manufacturers use?

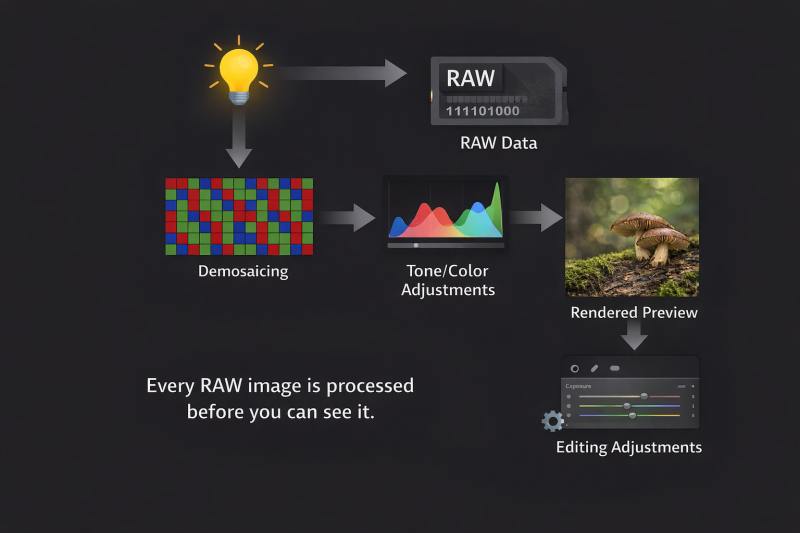

What Demosaicing Does

Demosaicing fills in the missing color values for each pixel.

Software uses nearby pixels to estimate the colors that were not recorded by a particular photosite.

These estimates affect how sharp edges appear, how color transitions are handled, and how fine textures like moss or leaves are represented.
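
As a rough illustration of estimating from nearby pixels, the sketch below averages the closest photosites that recorded each missing color. This is the simplest neighbor-averaging approach (often called bilinear), not what any particular RAW processor actually does, and the RGGB layout and sample values are assumptions.

```python
BAYER_TILE = (("R", "G"), ("G", "B"))  # assumed RGGB layout


def filter_color(row, col):
    """Return the color of the filter over the photosite at (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def estimate(raw, row, col, channel):
    """Return the measured value, or the average of matching neighbors."""
    if filter_color(row, col) == channel:
        return raw[row][col]  # this photosite measured the channel directly
    # Otherwise average every photosite in the 3x3 window that recorded it.
    samples = [
        raw[r][c]
        for r in range(max(row - 1, 0), min(row + 2, len(raw)))
        for c in range(max(col - 1, 0), min(col + 2, len(raw[0])))
        if filter_color(r, c) == channel
    ]
    return sum(samples) / len(samples)


def demosaic(raw):
    """Build an (R, G, B) tuple for every photosite in the mosaic."""
    return [
        [tuple(estimate(raw, r, c, ch) for ch in "RGB") for c in range(len(row))]
        for r, row in enumerate(raw)
    ]


# Hypothetical mosaic of a uniform orange surface (sensor counts).
raw = [
    [200, 100, 200, 100],
    [100, 40, 100, 40],
    [200, 100, 200, 100],
    [100, 40, 100, 40],
]
print(demosaic(raw)[1][1])  # (200.0, 100.0, 40)
```

On a flat surface, plain averaging recovers the color well. Across a sharp edge, the same averaging mixes values from both sides, which is one reason more sophisticated algorithms exist and why their results differ.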

Demosaicing is the first step where interpretation enters the image. The algorithms behind this are complex and vary between programs.

Different software will make different choices, which is why the same RAW file can look different in Lightroom, Capture One, DxO, or Luminar Neo (new article on this soon!).

In short, demosaicing is not an exact technical procedure; it is an interpretive act.

Understanding Linear Light

Linear light refers to RAW sensor data before any gamma encoding or tone adjustments.

It is proportional to the actual light in the scene.

Editing in linear light has advantages: you can recover highlights smoothly, lift shadows without introducing unnatural color shifts, and apply tone adjustments that maintain the natural relationship between brightness values.
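
A small sketch shows why linear data is convenient to edit. In linear light, an exposure change is a plain multiplication, so the ratio between any two brightness values survives the adjustment. The function name and sample values here are hypothetical.

```python
def adjust_exposure(linear_value, stops):
    """Scale a linear-light value by a number of photographic stops."""
    return linear_value * 2.0 ** stops


# Hypothetical shadow and highlight values, four stops apart.
shadow, highlight = 0.03125, 0.5

lifted_shadow = adjust_exposure(shadow, 1)
lifted_highlight = adjust_exposure(highlight, 1)

print(highlight / shadow)                # 16.0
print(lifted_highlight / lifted_shadow)  # 16.0
```

The scene is one stop brighter, but the highlight is still sixteen times brighter than the shadow, just as it was in front of the lens.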

Remember, there is no such thing as a RAW image, only a RAW file. The data must be processed before it becomes a picture.

When RAW is converted to a display-referred image with gamma encoding, those adjustments become less flexible because the relationship between light intensity and pixel values is no longer linear.
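
To see what gamma encoding does to the numbers, here is a short sketch using the standard sRGB transfer curve. Treat sRGB as one common example; RAW processors may use other curves internally.

```python
def srgb_encode(linear):
    """Encode a linear-light value in [0, 1] with the sRGB transfer curve."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


middle_gray = 0.18  # a common reference: an 18% reflectance gray card
for value in (middle_gray, 2 * middle_gray):
    print(f"linear {value:.2f} -> encoded {srgb_encode(value):.3f}")
```

Middle gray at 0.18 in linear light encodes to roughly 0.46, and doubling the light raises the encoded value to roughly 0.63 rather than doubling it. Once the data is encoded, "twice the number" no longer means "twice the light". It also hints at why unprocessed linear data looks dark: middle gray sits at only 18% of the scale.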

Camera Sensors vs Human Vision

The human eye does not see linearly. It adapts to brightness and color dynamically.

Your eye is constantly re-exposing, re-white-balancing, and tone-mapping the world in real time — without your permission. A camera can’t do this.

We perceive contrast and detail in dark areas differently from how cameras record them.

Our eyes and brain work more like a video camera than a still camera, continuously updating what we see. The camera does a better job of retaining exact details, while our brain may embellish what we saw.

Cameras, in contrast, measure light with fixed sensitivity and apply no adaptation. Any contrast or color you see in the result comes from how the data is processed, which is why two cameras can record the same scene at identical exposure, yet one file looks flat and the other more contrasty once rendered.

When you stood at the lake that morning, you could see texture in the fog, color in the trees, and detail in the shadows simultaneously. The camera could not. It could only record a light value at each photosite and rely on software to interpret nearby pixel values and, should I say, “guess” at the color and tone.

Software attempts to mimic human perception through tone mapping, gamma encoding, and color profiles, but it is an interpretation, not a direct replication.
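
As one simple example of tone mapping, the sketch below uses the Reinhard operator, a well-known formula that squeezes any amount of light into a displayable range. It is a stand-in chosen for brevity; commercial RAW processors use their own, more elaborate curves.

```python
def reinhard(linear):
    """Compress a non-negative linear value into the range [0, 1)."""
    return linear / (1.0 + linear)


# Hypothetical scene values spanning deep shadow to bright sky.
for value in (0.1, 1.0, 10.0, 100.0):
    print(f"{value:>6} -> {reinhard(value):.3f}")
```

Shadows pass through almost unchanged, while very bright values are pushed closer and closer together near the top of the range. That loosely resembles how we perceive a bright sky and a dark foreground at the same time.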

Here is a comparison of what camera sensors and the human eye can do.

Cameras are better light meters than eyes

A camera sensor:

- Measures photons linearly

- Counts light objectively

- Treats every photon the same

- Has no awareness of context, meaning, or importance

If twice as many photons hit a photosite, the value doubles. Period.

In that sense, a sensor is far better than the human eye at:

- Detecting small differences in brightness

- Preserving highlight data

- Recording wide dynamic range in a single, unbiased way

A sensor is brutally honest.

Human eyes are terrible light meters (on purpose)

Your eyes:

- Do not see linearly

- Do not measure absolute brightness

- Constantly adapt exposure, white balance, and contrast

- Throw away enormous amounts of physical light data

But that’s not a flaw — it’s a feature.

Your visual system prioritizes:

- Local contrast over absolute brightness

- Edges and structure over uniform areas

- Important objects over accurate measurement

- Memory and expectation over raw data

You don’t see photons.

You see relationships.

The key difference: cameras measure, humans interpret

A camera records light.

A human constructs a scene.

Your brain:

- Compresses dynamic range automatically

- Boosts shadow detail selectively

- Maintains color constancy under wildly different lighting

- Rebuilds missing information without telling you

It’s doing tone mapping, demosaicing, noise reduction, and sharpening in real time, guided by evolutionary survival and experience — not physics.

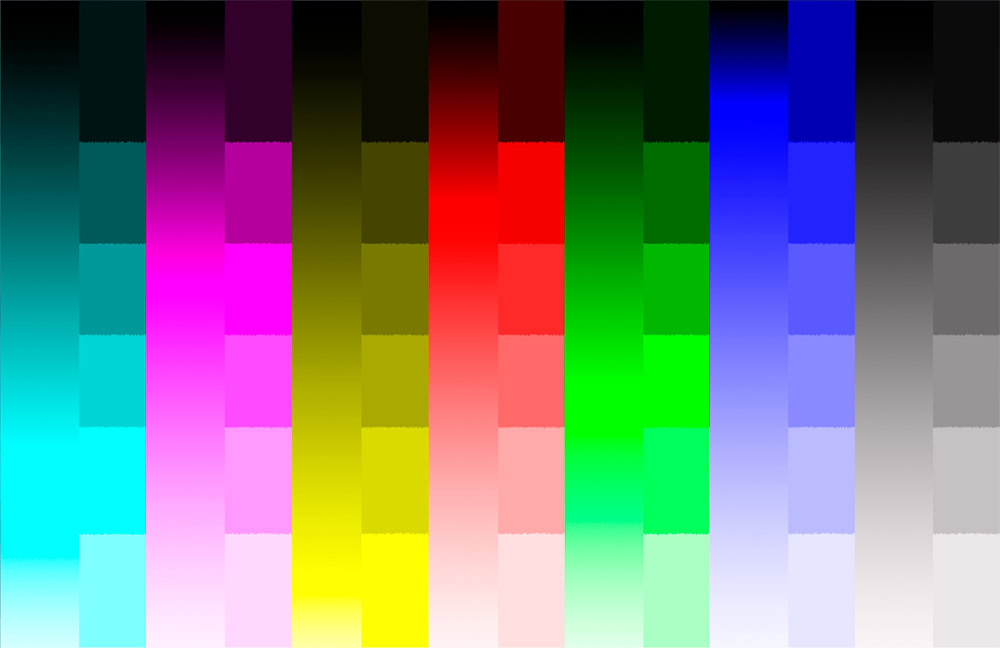

Here is an Example

In this image, every block has the same color and tone, but each one looks darker or lighter depending on the tone of its neighbor. Our brain interprets the colors differently from what they actually are.

Why RAW files feel “worse” than memory

RAW data often looks:

- Flat

- Dark

- Low contrast

- Muted in color

Not because it’s inferior — but because it’s:

- Linear

- Uninterpreted

- Missing the perceptual tricks your brain applied on location

In other words:

RAW looks wrong because it hasn’t been humanized yet.

Why Software Interpretation Matters

Every step from demosaicing to final rendering is interpretive.

Tone curves, color matrices, and sharpening algorithms all influence the final look. This is why the same RAW file looks different in different programs and why the default look matters.
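
A color matrix is easier to picture with numbers. The sketch below applies a 3x3 matrix to one pixel; the matrix values are invented for illustration, whereas real ones are measured for each camera model and differ between programs.

```python
# Hypothetical matrix for illustration only. Each row sums to 1.0,
# so a neutral gray stays neutral after the conversion.
CAMERA_TO_DISPLAY = (
    (1.6, -0.4, -0.2),
    (-0.3, 1.5, -0.2),
    (0.0, -0.5, 1.5),
)


def apply_matrix(matrix, rgb):
    """Mix the input channels into new R, G, B values, one row per output."""
    return tuple(sum(w * v for w, v in zip(row, rgb)) for row in matrix)


muted_green = (0.3, 0.5, 0.3)  # hypothetical linear sensor color
print(apply_matrix(CAMERA_TO_DISPLAY, muted_green))
```

With these made-up values, the muted green comes out at roughly (0.22, 0.60, 0.20): green rises while red and blue fall, so the color looks more saturated. A different matrix would render the same sensor data differently, which is part of why default looks vary between programs.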

Understanding this helps you make informed choices: you are not uncovering a hidden truth in the RAW file.

You are choosing a method that translates sensor data into a pleasing image that matches your memory of the scene.

I have written an article that explains the differences between editing software, including Lightroom/ACR, Capture One, DxO Photolab, and Luminar Neo. You can read the article by clicking this link: Comparing Editing Software.

Choosing the Right RAW Processor

Because demosaicing algorithms vary, software choice impacts your results.

Lightroom favors balanced interpretation; Capture One emphasizes detail; DxO prioritizes noise reduction and optical correction; Luminar Neo emphasizes stylistic enhancements.

Recognizing this helps you pick the processor that aligns with your vision and the type of image you want to produce.

The paradox (and this is important for photographers)

- Cameras are better at capturing light

- Humans are better at deciding what that light means

Editing is not about making photos more accurate to the sensor.

It’s about making them more faithful to human perception and memory.

That’s why:

- Tone curves exist

- Gamma exists

- Demosaicing exists

- Color profiles exist

They’re not fixing a broken sensor.

They’re translating a measurement into an experience.

Human vision is inefficient at measuring light — and extraordinarily efficient at turning light into meaning.